3. How do Computer Clocks work?

Note from the editor: This part is still incomplete!

3.1. Bits and Registers

Computers are good at adding bits. Therefore time is stored in a number of bits, and adding to these bits makes the time go on. The meaning of the value "zero" has to be defined separately (usually this is called the epoch).

Using more bits can widen the range of the time value, or it can increase the resolution of the stored time.

Example 2. Range and Resolution

Assume we use 8 bits to store a time stamp. There can be 256 different values then. If we choose to store seconds, our resolution is one second, and the range is from 0 to 255 seconds. If we prefer to store the time in minutes, we can store up to 255 minutes there.

With 64 bits you could have nanosecond resolution while still having a range significantly longer than your life.
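The trade-off between range and resolution in Example 2 can be worked out numerically. The following is a minimal Python sketch; the function name `clock_range_seconds` and the constant `SECONDS_PER_YEAR` are made up for this illustration and are not taken from any real clock implementation.

```python
def clock_range_seconds(bits, resolution_seconds):
    """Return the largest time value (in seconds) that an unsigned counter
    of `bits` bits can hold when one count equals `resolution_seconds`."""
    max_count = 2 ** bits - 1              # largest value of an unsigned counter
    return max_count * resolution_seconds

# Assumption for display only: one year taken as 365.25 days.
SECONDS_PER_YEAR = 365.25 * 24 * 3600

print(clock_range_seconds(8, 1))     # 8 bits counting seconds -> 255
print(clock_range_seconds(8, 60))    # 8 bits counting minutes -> 15300 seconds
print(clock_range_seconds(64, 1e-9) / SECONDS_PER_YEAR)  # 64 bits of nanoseconds, in years
```

Under these assumptions the 64-bit nanosecond counter covers roughly 584 years, which is what "significantly longer than your life" amounts to.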

3.2. Making Time go on

As seen before, the number of bits together with a definition of resolution and epoch is used to store time stamps. For a real clock, time must advance automatically.

Obviously a defined resolution of nanoseconds is useless if the value is updated once per minute. If you are still not convinced, consider reading such a clock three times a minute, and comparing the times you would get.

So we want a frequent update of the time bits. In most cases such an update is done in an interrupt service routine, and the interrupt is triggered by a programmable timer chip. Unfortunately, updating the clock bits is slow compared to generating a timer interrupt (after all, most processors have other work to do as well). Popular values for the interrupt frequency are 18.2, 50, 60, and 100 Hz. DEC Alpha machines typically use 1024 Hz.

Because of the speed requirement, most time bits use a linear time scale like seconds (instead of dealing with seconds, minutes, hours, days, etc.). Only when a human needs the current time is the time stamp read and converted.

In theory the mathematics to update the clock are easy: If you have two interrupts per hour, just add 30 minutes every interrupt; if you have 100 interrupts per second, simply add 10 ms per interrupt. In the popular UNIX clock model the units in the time bits are microseconds, and the increase per interrupt is "1000000 / HZ" ("HZ" is the interrupt frequency).[1] The value added at every timer interrupt is frequently referred to as the tick.
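The tick arithmetic described above can be sketched as a toy model. This is only an illustration in Python, not kernel code; the class `TickClock` and its method `timer_interrupt` are hypothetical names, and integer division is an assumption made here to keep the counter in whole microseconds.

```python
class TickClock:
    """Toy model of a clock that is advanced by a periodic timer interrupt."""

    def __init__(self, hz):
        self.hz = hz                    # interrupt frequency ("HZ")
        self.tick = 1_000_000 // hz     # microseconds added per interrupt
        self.microseconds = 0           # time since the epoch of this toy clock

    def timer_interrupt(self):
        # What the interrupt service routine does in this model: add one tick.
        self.microseconds += self.tick


clock = TickClock(hz=100)
for _ in range(100):                    # one second's worth of interrupts
    clock.timer_interrupt()
print(clock.tick, clock.microseconds)   # 10000 1000000
```

With whole-microsecond ticks, an interrupt frequency such as 1024 Hz does not divide 1000000 evenly: the tick becomes 976 microseconds, so 1024 interrupts add up to only 999424 microseconds. A real implementation would have to account for that remainder in some way.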

3.3. Clock Quality

When discussing clocks, the following quality factors are quite helpful:

The smallest possible increase of time the clock model allows is called resolution. If your clock increments its value only once per second, your resolution is also one second.

A high resolution does not help you at all if you can't read the clock. Therefore the smallest possible increase of time that can be experienced by a program is called precision.

In NTP precision is determined automatically, and it is measured as a power of two. For example, when ntpq -c rl prints precision=-16, the precision is 2^-16 seconds, or about 15 microseconds.
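The conversion from the printed exponent to a time value is a single power of two. A minimal sketch in Python follows; the helper name `precision_seconds` is invented for this example and is not part of NTP.

```python
def precision_seconds(exponent):
    """Convert a precision exponent (as in precision=-16) to seconds."""
    return 2.0 ** exponent

# precision=-16 expressed in microseconds (a little over 15)
print(precision_seconds(-16) * 1e6)
```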

If you like formal definitions, consider this one: "Precision is the random uncertainty of a measured value, expressed by the standard deviation or by a multiple of the standard deviation."

When repeatedly reading the time, the difference between successive readings may vary almost randomly. The difference of these differences (second derivative) is called jitter.
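The "difference of differences" can be illustrated with a few lines of Python. This is only a sketch of the definition given above, not the way NTP itself computes its jitter statistics; the function names and the sample readings are invented.

```python
def differences(values):
    """Return the differences between consecutive values."""
    return [b - a for a, b in zip(values, values[1:])]

def second_differences(readings):
    """Difference of the differences of successive clock readings."""
    return differences(differences(readings))

# Invented clock readings in arbitrary units
readings = [0, 10, 21, 30, 42]
print(differences(readings))         # [10, 11, 9, 12]
print(second_differences(readings))  # [1, -2, 3]
```

For a clock that is read at perfectly regular intervals and advances perfectly evenly, all second differences would be zero.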

A clock not only needs to be read, it must be set, too. The accuracy determines how close the clock is to an official time reference like UTC.

Again, if you prefer a formal definition: "Accuracy is the closeness of the agreement between the result of a measurement and a true value of the measurand."

Unfortunately none of the common clock hardware is very accurate. This is simply because the frequency that makes time increase is never exactly right. Even an error of only 0.001% would make a clock be off by almost one second per day. This is also a reason why discussing clock problems uses very fine measures: One PPM (Part Per Million) is 0.0001% (1E-6).
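To make the PPM arithmetic concrete, here is a small Python sketch that converts a frequency error in PPM into the time gained or lost per day. The function name `drift_per_day` is an invention for this example.

```python
SECONDS_PER_DAY = 24 * 60 * 60

def drift_per_day(ppm):
    """Seconds gained or lost per day for a frequency error given in PPM."""
    return ppm * 1e-6 * SECONDS_PER_DAY

print(drift_per_day(10))  # 0.001% equals 10 PPM: a bit less than one second per day
print(drift_per_day(1))   # 1 PPM: a bit less than a tenth of a second per day
```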

Real clocks quite frequently have a frequency error of several PPM. Some of the best clocks available still have errors of about 1E-8 PPM (for one of the clocks behind the German DCF77, the stability is stated to be 1.5 ns/day (1.7E-8 PPM); see http://www.ptb.de/english/org/4/43/432/real.htm).

Even if the systematic error of some clock model is known, the clock will never be perfect. This is because the frequency varies over time, mostly influenced by temperature, but it could also be air pressure or magnetic fields, etc. Reliability determines how long a clock can keep the time within a specified accuracy.

For long-term observation one may also notice variations in the clock frequency. The difference of the frequency is called wander. Therefore there can be clocks with poor short-term stability, but with good long-term stability, and vice versa.

3.3.1. Frequency Error

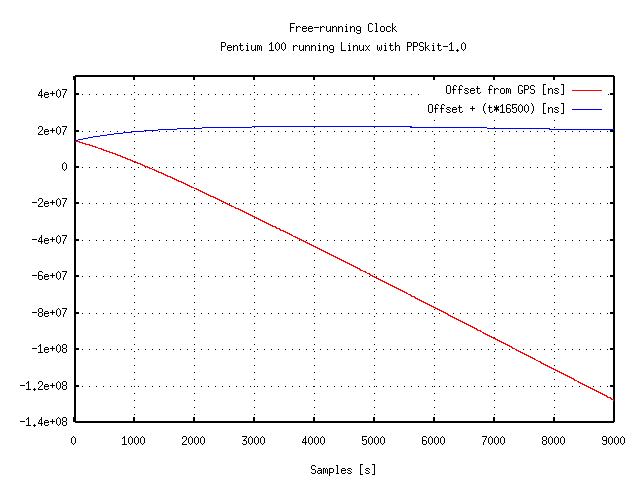

As explained before, it's not sufficient to correct the clock once. To illustrate the problem, have a look at Figure 1. The offset of a precision reference pulse has been measured with the free-running system clock. The figure shows that the system clock gains about 50 milliseconds per hour (red line). Even if the frequency error is taken into account, the error spans a few milliseconds within a few hours (blue line).

Figure 1. Offset for a free-running Clock

Even if the offset seems to drift away in a linear way, a closer examination reveals that the drift is not linear.
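One way to estimate the frequency error from a series of offset measurements is to fit a straight line through them; the slope of that line is the frequency error. The following Python sketch uses an ordinary least-squares fit. It is not necessarily how the figure was produced, the function name is hypothetical, and the sample data is invented to match the "50 milliseconds per hour" mentioned above.

```python
def estimate_frequency_error_ppm(samples):
    """Least-squares slope of offset versus elapsed time, in PPM.

    `samples` is a list of (elapsed_seconds, offset_seconds) pairs.
    """
    n = len(samples)
    mean_t = sum(t for t, _ in samples) / n
    mean_o = sum(o for _, o in samples) / n
    numerator = sum((t - mean_t) * (o - mean_o) for t, o in samples)
    denominator = sum((t - mean_t) ** 2 for t, _ in samples)
    return numerator / denominator * 1e6   # seconds per second -> PPM

# Invented data: a clock gaining exactly 50 ms per hour, sampled hourly
samples = [(hour * 3600, hour * 0.050) for hour in range(5)]
print(estimate_frequency_error_ppm(samples))   # a little under 14 PPM
```

What remains after subtracting the fitted line from the measurements is the non-linear part of the drift.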

Example 3. Quartz Oscillators in IBM compatible PCs

In my experiments with PCs running Linux I found out that the oscillator's frequency correction value increases by about 11 PPM after powering up the system. This is quite likely due to the increase of temperature. A typical quartz is expected to drift about 1 PPM per °C.

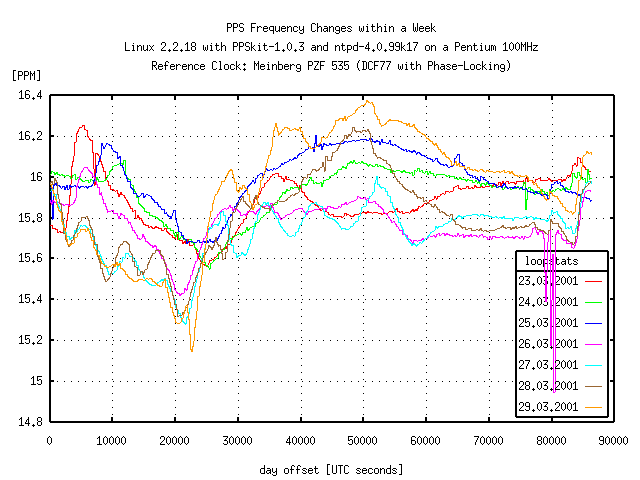

Even for a system that has been running for several days in a non-air-conditioned office, the correction value changed by more than 1 PPM within a week (See Figure 2 for a snapshot from that machine). It is possible that a change in supply voltage also changes the drift value of the quartz.

As a consequence, without continuous adjustments the clock must be expected to drift away at roughly one second per day in the worst case. Even worse, the values quoted above may increase significantly for other circuits, or even more for extreme environmental conditions.

Figure 2. Frequency Correction within a Week

Some spikes may be due to the fact that the DCF77 signal failed several times during the observation, causing the receiver to resynchronize with an unknown phase.

3.3.1.1. How bad is a Frequency Error of 500 PPM?

As most people have some trouble with that abstract PPM (parts per million, 0.0001%), I'll simply state that 12 PPM correspond roughly to one second per day. So 500 PPM means the clock is off by about 43 seconds per day. Only poor old mechanical wristwatches are worse.

3.3.1.2. What is the Frequency Error of a good Clock?

I'm not sure, but I think a chronometer is allowed to drift by at most six seconds a day when the temperature doesn't change by more than 15° Celsius from room temperature. That corresponds to a frequency error of 69 PPM.

I read about a temperature compensated quartz that should guarantee a clock error of less than 15 seconds per year, but I think they were actually talking about the frequency variation instead of absolute frequency error. In any case that would be 0.47 PPM. As I actually own a wristwatch that should include that quartz, I can state that the absolute frequency error is about 2.78 PPM, or 6 seconds in 25 days.

For the Meinberg GPS 167 the frequency error of the free running oven-controlled quartz is specified as 0.5 PPM after one year, or 43 milliseconds per day.
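The figures quoted in these answers can be checked by going the other way, from an observed clock error over some interval to a frequency error in PPM. A small Python sketch follows; the function name `frequency_error_ppm` and the constants are chosen for this example, and a year is assumed to be 365.25 days.

```python
DAY = 24 * 60 * 60       # seconds per day
YEAR = 365.25 * DAY      # assumption: one year is 365.25 days

def frequency_error_ppm(error_seconds, interval_seconds):
    """Frequency error in PPM for a clock that is off by `error_seconds`
    after running for `interval_seconds`."""
    return error_seconds / interval_seconds * 1e6

print(frequency_error_ppm(6, DAY))        # chronometer: six seconds a day
print(frequency_error_ppm(15, YEAR))      # compensated quartz: 15 seconds per year
print(frequency_error_ppm(6, 25 * DAY))   # wristwatch: 6 seconds in 25 days
```

The results come out close to 69 PPM, just under 0.5 PPM, and about 2.78 PPM respectively.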