Hierarchical Belief Propagation on the GPU for Stereo and Motion

Scott Grauer-Gray and Chandra Kambhamettu

The global belief propagation algorithm is known to generate good results for stereo and potentially for motion, but it is limited by high storage requirements and a longer run-time than other methods. We introduce a hierarchical belief propagation scheme in which rough results computed at higher levels are refined at lower levels, reducing both the storage requirements and the running time. This makes belief propagation feasible on stereo sets and image sequences whose search space is so large that traditional belief propagation cannot be run because of its storage requirements.
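As a rough illustration of why shrinking the label set at each level matters, the following Python sketch compares the message storage of a full 2D motion search with that of a single hierarchical level. It assumes one message vector per pixel in each of the four grid directions, stored as 4-byte floats. The image size, motion range, increment, and 5x5 per-level candidate window are hypothetical values chosen for illustration, not the settings used in the publication, and `level_candidates` is only one plausible way to centre a level's candidates on the coarser estimate.

```python
def labels_per_axis(max_move, increment):
    """Count candidate values on one axis covering [-max_move, max_move]."""
    return int(round(2 * max_move / increment)) + 1


def message_storage_bytes(width, height, num_labels, bytes_per_value=4):
    """Bytes for one message vector per pixel in each of the 4 grid directions."""
    return width * height * 4 * num_labels * bytes_per_value


def level_candidates(center, increment, per_axis):
    """Candidate values on one axis, centred on the estimate from the coarser level."""
    half = per_axis // 2
    return [center + (i - half) * increment for i in range(per_axis)]


# Hypothetical problem size (assumption, not taken from the publication).
WIDTH, HEIGHT = 640, 480

# Full search: motions in [-8, 8] on both axes at an increment of 0.25.
full_labels = labels_per_axis(8, 0.25) ** 2  # 65 * 65 = 4225 labels

# One hierarchical level: a 5x5 window of candidates around the current estimate.
level_labels = 5 * 5

full_bytes = message_storage_bytes(WIDTH, HEIGHT, full_labels)
level_bytes = message_storage_bytes(WIDTH, HEIGHT, level_labels)

print(f"full search: {full_bytes / 1e9:.1f} GB of messages")
print(f"one level:   {level_bytes / 1e6:.1f} MB of messages")

# Candidates for a finer level centred on a coarse estimate of 1.0.
print(level_candidates(1.0, 0.5, 5))
```

Under these assumed numbers the full search needs roughly 20 GB for messages alone, while a single level needs on the order of 100 MB, which is the kind of gap that makes a large search space tractable on a GPU.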

The algorithm is implemented on the graphics processing unit (GPU) using CUDA. This uses the parallel processing power of the device to further reduce the run-time, and the built-in interpolation capabilities of the GPU to retrieve results at sub-pixel accuracy with no change of implementation. The code that generates the optimal results in the WACV publication listed below is available under “Software”.
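To make the sub-pixel idea concrete, here is a minimal CPU-side Python sketch of bilinear interpolation, the kind of lookup that GPU texture hardware can perform when an image is sampled at a non-integer coordinate. The functions `bilinear_sample` and `data_cost`, the absolute-difference cost, and the convention that integer coordinates land exactly on pixel values are assumptions made for this illustration. They are not taken from the released code, and the hardware's own coordinate convention and precision may differ.

```python
import math


def bilinear_sample(image, x, y):
    """Sample a 2D list of intensities at a fractional (x, y), clamping to the border."""
    height, width = len(image), len(image[0])
    x = min(max(x, 0.0), width - 1.0)
    y = min(max(y, 0.0), height - 1.0)
    x0, y0 = int(math.floor(x)), int(math.floor(y))
    x1, y1 = min(x0 + 1, width - 1), min(y0 + 1, height - 1)
    ax, ay = x - x0, y - y0
    # Blend along x on the two neighbouring rows, then blend the rows along y.
    top = (1 - ax) * image[y0][x0] + ax * image[y0][x1]
    bottom = (1 - ax) * image[y1][x0] + ax * image[y1][x1]
    return (1 - ay) * top + ay * bottom


def data_cost(left, right, x, y, disparity):
    """Absolute intensity difference for a (possibly fractional) disparity."""
    return abs(left[y][x] - bilinear_sample(right, x - disparity, y))


# Tiny hypothetical scanlines: a linear intensity ramp in both views.
left = [[0.0, 10.0, 20.0, 30.0]]
right = [[0.0, 10.0, 20.0, 30.0]]

print(data_cost(left, right, 2, 0, 0.0))  # identical pixels, cost 0.0
print(data_cost(left, right, 2, 0, 0.5))  # samples halfway between 10 and 20
```

Because the fractional lookup is hidden inside the sampling function, the cost computation is the same whether the disparity increment is 1 or 0.5, which matches the claim that sub-pixel accuracy needs no change of implementation.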

Figure 1 shows the output disparity map when running our implementation for stereo using a single level with a disparity increment of 0.5, showing our implementation’s capability to retrieve results at sub-pixel accuracy.

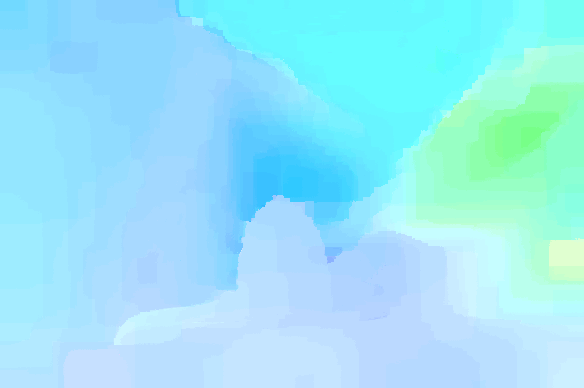

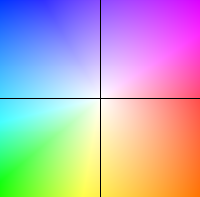

Figure 2 shows the output motion estimation when running our implementation using five hierarchical levels, along with a legend showing the motion corresponding to each color.

The Venus stereo set and Dimetrodon image sequence used in the experiments shown in the figures are from the Middlebury datasets available at http://vision.middlebury.edu/.

Figure 1: (Left to right) Venus stereo set and output disparity map using our implementation with a disparity increment of 0.5.

Figure 2: (Left to right) Dimetrodon image sequence, output motion estimation image using our implementation with 5 hierarchical levels, and legend showing motion/color correspondence in motion estimation image.

Software

Related publications

- Scott Grauer-Gray, Chandra Kambhamettu. Hierarchical Belief Propagation To Reduce Search Space Using CUDA for Stereo and Motion Estimation. IEEE Computer Society's Workshop on Applications of Computer Vision (WACV) 2009.

Acknowledgement

- This work was partially supported by a U.S. National Aeronautics and Space Administration award NASA NNX08AD80G under the ROSES Applied Information Systems Research program.